The Model Divergence Report

Same question, different AI, different answers. How 8 major AI models disagree on which brands to recommend - and what it means for your visibility strategy.

The Landscape

The Multi-Model Reality

When someone asks ChatGPT, Claude, Gemini, or Perplexity for a product recommendation, they expect consistent answers. But our analysis of 797,644 comparisons reveals a startling truth: AI models agree less than half the time.

This isn't a bug - it's a fundamental characteristic of how different AI systems are trained, what data they've seen, and how they interpret intent. For brands, this means your visibility on ChatGPT tells you little about your visibility on Claude.

We analyzed 797,644 valid comparisons across 8 major AI models including Google AI Overviews, revealing patterns that should reshape how you think about AI visibility strategy.

The Bottom Line

Each AI model is its own channel.

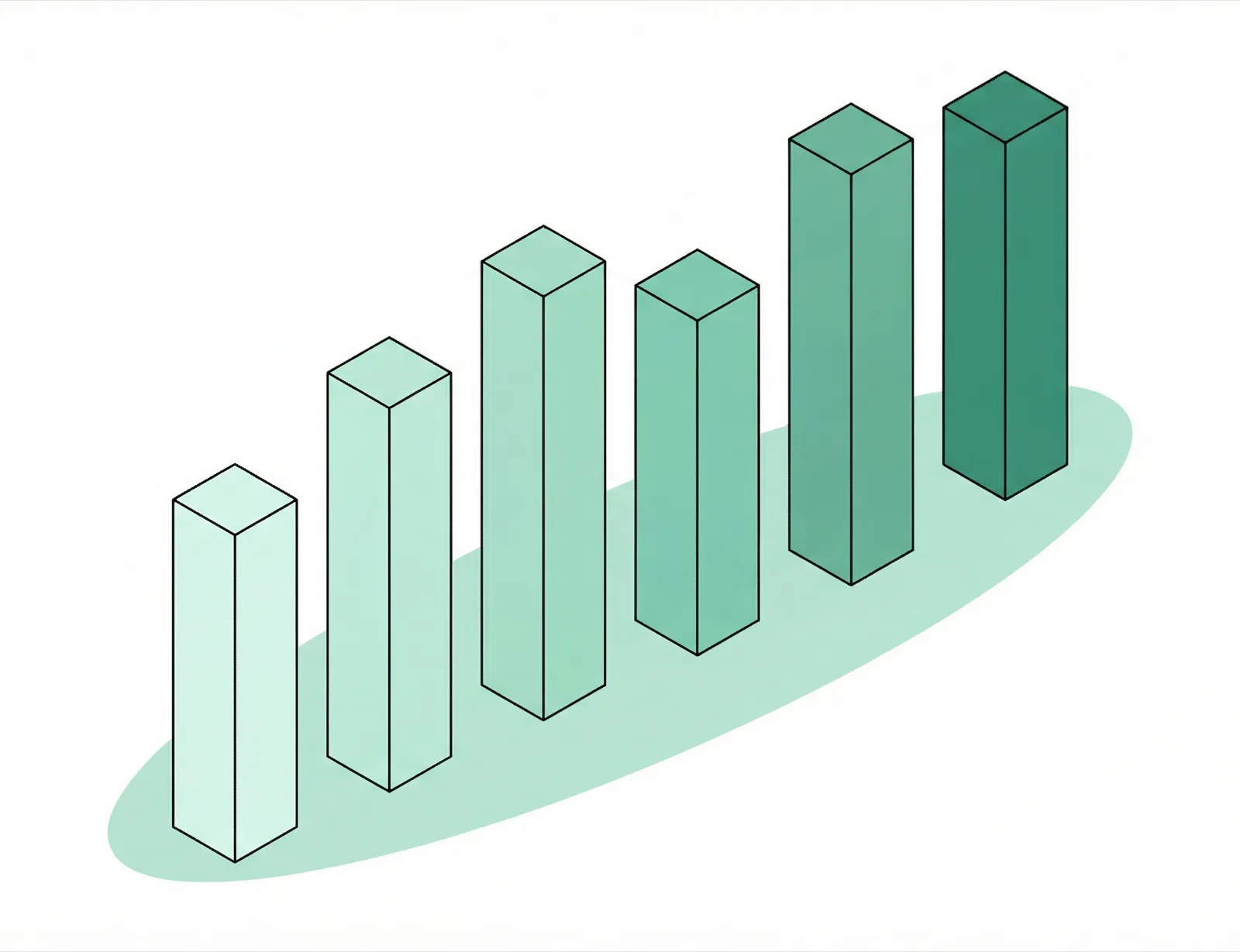

Agreement Distribution

The Agreement Problem

When you ask different AI models the same question, they usually disagree. More than half of all queries (60%) have less than 50% agreement between models on the top brand recommendation.

Only 4.0% of queries achieve perfect consensus - all 8 models recommending the same brand. This is rare, and typically happens only for brands with overwhelming category dominance.

Model Correlations

[Figure: model correlations across OpenAI, Claude, Gemini, AIO, Grok, Deepseek, Meta, and Perplexity]

Model Clusters Emerge

Some models tend to agree with each other more often, forming implicit clusters. Claude and Deepseek show the highest correlation at 35%.
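The report does not define how "correlation" between two models is computed. One plausible reading, sketched here as an assumption rather than the report's actual method, is the share of queries answered by both models on which they name the same top brand. The function name `pairwise_agreement` and the input layout (one dict per query, mapping model name to its #1 brand or `None`) are hypothetical.

```python
from itertools import combinations
from typing import Dict, List, Optional, Tuple


def pairwise_agreement(
    queries: List[Dict[str, Optional[str]]],
    models: List[str],
) -> Dict[Tuple[str, str], float]:
    """Share of shared queries on which two models name the same top brand.

    Assumption: each query is a dict of model name -> #1 brand (or None
    when the model gave no recommendation). Pairs with no shared queries
    are omitted from the result.
    """
    rates: Dict[Tuple[str, str], float] = {}
    for a, b in combinations(models, 2):
        # Only count queries where both models returned a brand.
        shared = [(q[a], q[b]) for q in queries if q.get(a) and q.get(b)]
        if shared:
            matches = sum(1 for x, y in shared if x == y)
            rates[(a, b)] = matches / len(shared)
    return rates
```

Sorting the resulting pairs by rate would surface clusters such as the Claude and Deepseek pairing described above.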

- Claude + Deepseek: 35%

- Meta + Perplexity: 10%

Model Coverage

Not All Models Show Up

Some AI models are more likely to provide brand recommendations than others. Meta appears in 95.0% of queries, while Google AIO only shows up in 56.5%.

This matters because if a model rarely provides recommendations in your category, your visibility strategy for that model may need a different approach.
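Coverage as described here can be read as the fraction of queries for which a model returns any brand recommendation. The sketch below illustrates that reading; the function name `coverage_by_model` and the input layout (one dict per query, mapping model name to its #1 brand or `None`) are assumptions, not the report's actual pipeline.

```python
from typing import Dict, List, Optional


def coverage_by_model(
    queries: List[Dict[str, Optional[str]]],
    models: List[str],
) -> Dict[str, float]:
    """Fraction of queries in which each model gave a brand recommendation.

    Assumption: a missing key, None, or an empty string all mean the
    model gave no recommendation for that query.
    """
    total = len(queries)
    if total == 0:
        return {m: 0.0 for m in models}
    return {
        m: sum(1 for q in queries if q.get(m)) / total
        for m in models
    }
```

A model with low coverage in your category gives you fewer queries to measure, so its results are worth reading alongside the coverage figure itself.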

- Meta: 95.0%

- OpenAI: 85.4%

- Grok: 83.0%

- Gemini: 82.2%

- Deepseek: 80.9%

- Claude: 79.9%

- Perplexity: 79.4%

- AIO: 56.5%

Query Types

Comparison Queries = Highest Agreement

When users ask to compare specific brands ("Nike vs Adidas"), models agree 50.4% of the time. But "best of" and general queries cause the most disagreement - exactly the queries where brands have the most opportunity.

This makes sense: comparison queries have clearer context, while open-ended recommendations leave more room for interpretation.

Maximum Divergence

These real examples show complete disagreement - 8 different AI models recommending 8 different brands for the same query. This is the reality brands need to understand.

[Figure: three example queries, each showing one recommendation per model (OpenAI, Claude, Gemini, AIO, Grok, Deepseek, Meta, Perplexity)]

When They Agree

Strong Dominance + Clear Context = Agreement

When ALL 8 models agree on a recommendation, it is typically for queries where:

- A single brand has overwhelming category dominance

- The query is highly specific or niche

- There's clear category definition with limited alternatives

The Playbook

The old playbook is broken.

Optimizing for "AI" as a single channel doesn't work. Each model sees a different web. Here's what that means for your strategy.

Methodology

How We Measured Agreement

We analyzed 44,088 brand visibility reports from Trakkr, each containing responses from up to 8 major AI models for the same set of queries.

For each query, we identified the #1 recommended brand from each model, then calculated what percentage of models agreed on the same top brand. Average agreement across all queries was 43.3%, meaning fewer than half of the models typically name the same top brand.

Comparisons were filtered to include only queries where at least 5 models provided a valid brand recommendation, so that each agreement score rests on a meaningful number of responses.
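The measurement above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Trakkr's actual code: it takes "agreement" to mean the share of responding models that name the most common #1 brand, treats brand names as case-insensitive, and uses hypothetical names (`agreement_score`, `average_agreement`, `MIN_MODELS`).

```python
from collections import Counter
from typing import Dict, List, Optional

MIN_MODELS = 5  # minimum valid recommendations per query, per the filter above


def agreement_score(top_brands: Dict[str, Optional[str]]) -> Optional[float]:
    """Share of responding models that name the most common #1 brand.

    Returns None when fewer than MIN_MODELS models gave a valid
    recommendation, so the query is excluded from the analysis.
    """
    # Assumption: brand names are compared case-insensitively after trimming.
    valid = [b.strip().lower() for b in top_brands.values() if b and b.strip()]
    if len(valid) < MIN_MODELS:
        return None
    most_common_count = Counter(valid).most_common(1)[0][1]
    return most_common_count / len(valid)


def average_agreement(queries: List[Dict[str, Optional[str]]]) -> Optional[float]:
    """Mean agreement score over all queries that pass the filter."""
    scores = [s for s in map(agreement_score, queries) if s is not None]
    return sum(scores) / len(scores) if scores else None
```

Under this definition, a query where all 8 models name different brands scores 1/8 (12.5%), and perfect consensus scores 100%.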
